In two previous articles, we detailed example integrations of motion control and robotics as well as peripheral motion systems that complement robotics.

Robot-related software today offers cross-compatibility to take mechanical concepts into validated robotic motions and deployable control code. To begin work, robot-controller simulation and programming software defines joint and Cartesian trajectories, kinematics, motions, singularity handling, and the coordination of robotics with other workcell systems. Offline programming and CAD-to-path software converts process definitions into motion trajectories so that reaches and orientations are feasible and collision-free. It interfaces with robot motion kernels but doesn’t execute real motion.

Open communication standards are critical for multi-vendor interoperability and long-term maintainability. — Insights from the recent A3 Business Forum

The best motion-control software for servo-driven conveyors, indexing tables, gantries, lift axes, and tool changers surrounding robotics allows tuning (including autotuning — even with AI in select cases) as well as camming, gearing, and coordinated multi-axis motion.
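To make the gearing and camming terms concrete, here is a minimal sketch in plain Python. It assumes a follower axis slaved to a master axis (say, a lift axis following a conveyor). The function names, the cam table values, and the 360-unit master cycle are all hypothetical and chosen only for illustration; real motion controllers implement these functions in firmware with far richer profiles.

```python
from bisect import bisect_right


def geared_position(master_pos, ratio, offset=0.0):
    """Electronic gearing: the follower tracks the master at a fixed ratio."""
    return ratio * master_pos + offset


def cam_position(master_pos, cam_table, cycle_length):
    """Electronic camming: the follower position comes from a lookup table.

    cam_table is a list of (master, follower) points sorted by master value
    and spanning one master cycle. Linear interpolation is used between
    points (an assumption; real cams often use polynomial segments).
    """
    phase = master_pos % cycle_length  # wrap the master into one cycle
    masters = [m for m, _ in cam_table]
    i = bisect_right(masters, phase) - 1
    i = min(max(i, 0), len(cam_table) - 2)  # clamp to a valid segment
    (m0, f0), (m1, f1) = cam_table[i], cam_table[i + 1]
    return f0 + (f1 - f0) * (phase - m0) / (m1 - m0)


# Hypothetical cam: rise over 90 units, dwell until 180, return by 360.
CAM_TABLE = [(0.0, 0.0), (90.0, 10.0), (180.0, 10.0), (360.0, 0.0)]

if __name__ == "__main__":
    print(geared_position(100.0, 0.5))
    print(cam_position(45.0, CAM_TABLE, 360.0))
    print(cam_position(405.0, CAM_TABLE, 360.0))  # same phase as 45.0
```

Gearing is a single linear relationship, while camming lets the follower rise, dwell, and return within each master cycle. That is why camming suits indexing and synchronized pick motions.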

Hardware-agnostic layers enable easier “swap-and-play” as components commoditize.

Commissioning and co-simulation software validates robot models alongside motion and safety logic to confirm timing, handshakes, interlocks, and coordinated moves with real control code, traditionally before physical commissioning. Now, however, so-called physical AI is reordering the approach, so some types of virtual commissioning are beginning to yield to physical commissioning that leverages controls over actuators and machine vision. This is a hot topic in the industry right now. Additional software abounds for factory simulation and monitoring, but is beyond the focus here.

Vendors not aligning to industry protocols risk exclusion from buyer shortlists.

Finally, digital manufacturing and factory simulation software evaluates cells or lines for (among other things) throughput and cycle time. It can inform workcell arrangements and synchronization strategies. Then, operations, diagnostics, and monitoring software collects data on cycle times, faults, limits, and energy usage from robot controllers and motion drives for maintenance … and reconfiguration in some cases.
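As a rough illustration of the throughput and cycle-time arithmetic such tools perform, consider the following sketch. It assumes a simple serial line with no buffers and no downtime, so the slowest station sets the pace. The station names and times are hypothetical, and real factory-simulation packages model far more (buffers, failures, and changeovers).

```python
def line_throughput_per_hour(station_cycle_times_s):
    """Parts per hour for a serial, unbuffered line (bottleneck-limited)."""
    bottleneck_s = max(station_cycle_times_s.values())
    return 3600.0 / bottleneck_s


def station_utilization(station_cycle_times_s):
    """Fraction of each line cycle that every station is actually busy."""
    bottleneck_s = max(station_cycle_times_s.values())
    return {name: t / bottleneck_s for name, t in station_cycle_times_s.items()}


# Hypothetical workcell: cycle times in seconds per part.
STATIONS = {"robot_pick": 4.0, "indexing_table": 6.0, "inspection": 3.0}

if __name__ == "__main__":
    print(line_throughput_per_hour(STATIONS))
    print(station_utilization(STATIONS))
```

In this made-up case the indexing table is the bottleneck, so speeding up the robot alone would not raise throughput. That is the kind of insight that informs workcell arrangement and synchronization choices.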

Machine vision for robotics

Where simpler solutions are insufficient or inappropriate, machine-vision systems are indispensable for many robotic workcells. That’s because they can help robots execute tasks in unstructured environments. Of course, machine vision has long been its own specialty field, and historically it was difficult to set up and calibrate for proper functioning. That was certainly true of the 2D cameras and frame grabbers of the 1980s fitted to early industrial robots. Only under perfect lighting did they enable the simplest part location, orientation, and inspection before pick-and-place.

Especially in packaging, logistics, and flexible automation, vision is indispensable for servo-level motion feedback.

Despite advancements in resolutions, processing speeds, and 3D vision employing structured light and stereo imaging, high expertise was needed to get good performance from vision systems. Even then, the emphasis remained on inspection and quality control.

In the mid-2000s the author was invited by a vision and scanning-component supplier to come see a machine-vision installation at a local automotive engine plant. The plant engineers were extremely frustrated with the solution’s lackluster performance — especially because of how expensive that vision setup was.

Today’s machine-vision hardware and software deliver dependable performance, especially for bin picking, inline inspection, and adaptive path planning. Also gone are the days of segregated vision and motion control. Now, some servo functions operate synchronously with vision and industrial communications to inform deterministic realtime trajectories. Workpiece pose, depth, and contour data stream in realtime into robot and motion controllers to yield true vision-guided trajectories rather than basic offsets.


AI is also prompting a new round of major advancements, as demonstrated by Inbolt’s vision-guided bin-picking technology:

Traditional bin picking with machine vision uses expensive long-range 3D cameras on fixed overhead frames. Even after complex calibration and pre-calculating grasp points, systems are easily disrupted by slight lighting changes, bin moves, or issues with the predefined pick points. In contrast, AI continually perceives, understands, and adapts bin-picking approaches in realtime. Leading systems in this space mount the 3D cameras directly on the robot near the end effector, so they’re closer to the objects being handled.
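With a camera mounted near the end effector, each detection arrives in the camera's own frame and must be chained through the robot's current pose to get a pick target in the robot base frame. The sketch below shows that chain in plain Python. It is a simplification under stated assumptions: rotations are about the vertical axis only, the frame names and numeric poses are hypothetical, and the flange-to-camera transform is assumed to come from a prior hand-eye calibration. It is not any vendor's actual method.

```python
import math


def transform(yaw_rad, x, y, z):
    """4x4 homogeneous transform: rotation about z by yaw, then translation."""
    c, s = math.cos(yaw_rad), math.sin(yaw_rad)
    return [
        [c, -s, 0.0, x],
        [s, c, 0.0, y],
        [0.0, 0.0, 1.0, z],
        [0.0, 0.0, 0.0, 1.0],
    ]


def matmul4(a, b):
    """Multiply two 4x4 matrices stored as nested lists."""
    return [[sum(a[i][k] * b[k][j] for k in range(4)) for j in range(4)]
            for i in range(4)]


def object_in_base(t_base_flange, t_flange_cam, t_cam_obj):
    """Chain base->flange, flange->camera, and camera->object transforms."""
    return matmul4(matmul4(t_base_flange, t_flange_cam), t_cam_obj)


# Hypothetical poses in meters and radians.
T_BASE_FLANGE = transform(math.pi / 2, 0.5, 0.0, 0.6)  # from robot kinematics
T_FLANGE_CAM = transform(0.0, 0.0, 0.05, 0.0)          # from hand-eye calibration
T_CAM_OBJ = transform(0.0, 0.1, 0.0, -0.4)             # from the vision system

T_BASE_OBJ = object_in_base(T_BASE_FLANGE, T_FLANGE_CAM, T_CAM_OBJ)
PICK_XYZ = [T_BASE_OBJ[i][3] for i in range(3)]

if __name__ == "__main__":
    print(PICK_XYZ)
```

Because the base-to-flange transform is re-read each cycle, the pick target stays valid even if the bin shifts between scans. Fixed overhead cameras instead depend on one static calibration.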

Non-engineering media outlets emphasize research-grade humanoid robotics with AI. However, cost-effective inspection and industrial-grade robotics abound … some with AI and advanced vision:

• Compact igus RBTX robotics with AI Vision Set.

• Standard Bots’ Core, Thor, and Bolt (AI new in 2026).

• Elephant Robotics Pro ecosystem with AI and 3D vision sets.

• Unitree Z1 Precision Robotic Arms.

• DOBOT collaborative robots with Vision Kit (AI vision).

• JAKA collaborative robots with integrated vision positioning.

• Han’s Robot Elfin with end-vision integration.

• UFactory xArm supported by a 2D/3D vision ecosystem.

• Dorna Robotics 2 with Vision Kit.

• Niryo Ned2 with Vision Set and TensorFlow.

In fact, AI is even making inroads in more modest vision solutions. The evolving “low-cost robotics” lineup from supplier igus gives engineers a comprehensive ecosystem for building whole workcells. Its AI Vision Set includes a 4K USB camera that connects to igus Robot Control software and ReBeL articulated robotic arms (or to a computer for configuration). The vision app offers basic 2D contour recognition to support in-house development of custom applications.

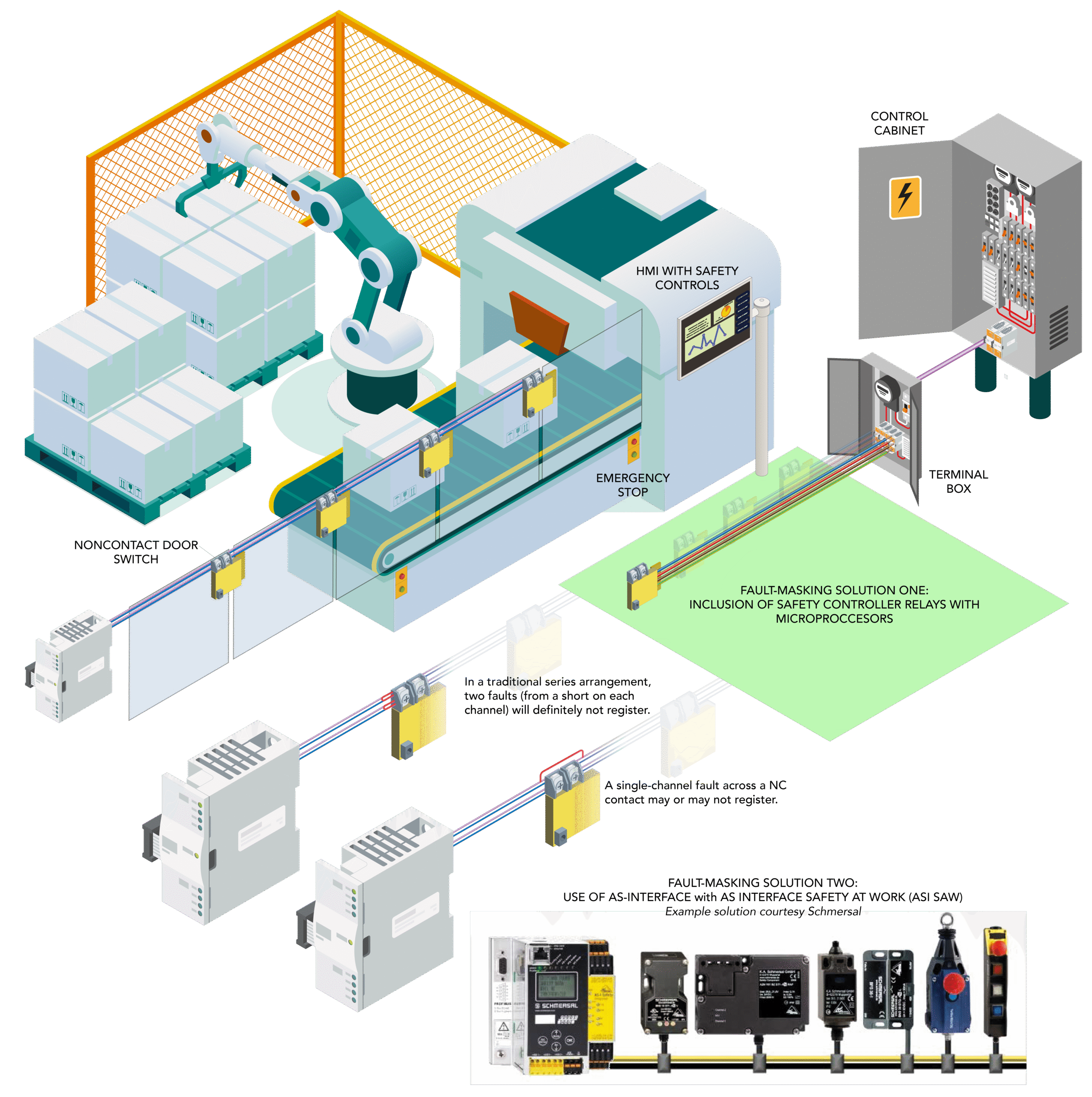

Safety is Job One with robotics

Indeed, there’s been a blurring of lines between traditional industrial robotics and collaborative robots. Robotic safety features are another aspect of this convergence. Newer systems aim to reduce reliance on gates, fences, and other physical isolation, as well as inappropriate over-reliance on emergency-stop buttons that can cause various production issues. Personnel-detecting equipment such as light curtains is increasingly relevant here.

The latest news in robotics safety is that in late 2025 the Association for Advancing Automation (A3) published the American National Standard for industrial robot safety requirements to govern robots’ safe manufacture, integration, and use. ANSI/A3 R15.06 dates to 1986 … ISO 10218 (upon which the current iteration is based) dates to 2006. Basically, to unify U.S. approaches, the Robotic Industries Association (RIA) combined the ISO standard into their ANSI/RIA standard.

In 2021, A3 absorbed RIA and the management of its standards. This and the fact that the standard now comes from A3 underscore the inextricable link between motion and robotics.

So, the new ANSI/A3 R15.06-2025 and .06-3-2025 include Parts 1 and 2, which are basically the U.S. adoption of ISO 10218-1:2025 and 2:2025. Then a Part 3 (with U.S. and Canadian input) addresses requirements not covered by ISO. The standard emphasizes risk assessments, personnel safety protocols, and technical directives for system integrators, manufacturers, and end users to maintain industrial-environment safety.
