Two terms are often used to describe the type of control that can be achieved with Industrial Ethernet networks: deterministic and real-time. Although sometimes used interchangeably, the two terms refer to different, but related, characteristics of a network’s ability to transmit data or respond to events. Industrial Ethernet networks, such as EtherNet/IP, EtherCAT, and SERCOS III, adapt and modify standard Ethernet in different ways to provide varying levels of real-time, deterministic control.

Deterministic: guaranteed and reliable

Wikipedia defines a deterministic system as one “in which no randomness is involved in the development of future states of the system.” In other words, for a given initial state, a deterministic system will always return the same output or reach the same future state.

In the context of Industrial Ethernet, deterministic communication is the ability of the network to guarantee that an event will occur (or a message will be transmitted) in a specified, predictable period of time, neither sooner nor later. This is sometimes referred to as a “bounded response.” An application is considered deterministic if its timing can be guaranteed within a certain margin of error. Determinism therefore provides a measure of reliability: the communication or output will not only be correct, but will also happen within the specified time.

Standard Ethernet networks are probabilistic: the network’s operation relies on the assumption that nodes (devices) will probably not transmit at the same time. If (or, more correctly, when) two nodes attempt to transmit at the same time, the result is a collision. To handle collisions, Ethernet uses CSMA/CD (Carrier Sense Multiple Access with Collision Detection), which puts responsibility on each node to detect a collision and retransmit its data if one occurs. Before the node attempts to retransmit its data, it pauses for a random period of time, referred to as a “backoff delay.” This reduces, but does not eliminate, the chance of a subsequent collision, and because the delay is random, the time at which a message finally gets through cannot be guaranteed.

Image credit: Analog Devices, Inc.
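To see why a random backoff delay rules out a guaranteed transmission time, the short Python sketch below models the truncated binary exponential backoff that CSMA/CD uses. It is an illustration only, not an implementation of any particular Ethernet controller: the function names are hypothetical, and the 51.2 µs slot time assumes classic 10 Mbit/s Ethernet.

```python
import random

SLOT_TIME_US = 51.2   # assumed slot time for 10 Mbit/s Ethernet, in microseconds
MAX_ATTEMPTS = 16     # the frame is discarded after 16 failed attempts
BACKOFF_LIMIT = 10    # the backoff exponent stops growing after 10 collisions


def backoff_delay_us(collision_count, rng=random):
    """Return a random backoff delay (in microseconds) after a collision.

    collision_count is the number of collisions this frame has seen so far.
    """
    if not 1 <= collision_count <= MAX_ATTEMPTS:
        raise ValueError("collision_count must be between 1 and 16")
    exponent = min(collision_count, BACKOFF_LIMIT)
    slots = rng.randrange(2 ** exponent)  # uniform integer in [0, 2**exponent - 1]
    return slots * SLOT_TIME_US


def worst_case_delay_us(collision_count):
    """Largest single delay the backoff can produce after this many collisions."""
    exponent = min(collision_count, BACKOFF_LIMIT)
    return (2 ** exponent - 1) * SLOT_TIME_US


if __name__ == "__main__":
    for n in (1, 2, 3, 10):
        print(f"collision {n}: delay {backoff_delay_us(n):.1f} us, "
              f"worst case {worst_case_delay_us(n):.1f} us")
```

Running the script several times gives different delays for the same collision count, and the range of possible delays widens with each successive collision. That variability is what the deterministic Industrial Ethernet protocols are designed to avoid.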

Real-time: on time, every time

For a system to be considered real-time, it must specify a maximum time in which the system responds to an event or transmits a message (data packet). A non-real-time system, on the other hand, operates on a best-effort basis, with no guaranteed deadline. It’s important to note that determinism is a defining quality of a real-time system.

Real-time communication is typically required for event response and closed-loop motion control applications, but there are two types of real-time control: hard real-time and soft real-time, and it’s important to distinguish between them.

Hard real-time means that not a single deadline can be missed. There is an absolute limit on the response time, or else the system will experience a failure or exception. Soft real-time is less strict. There is a specified cycle time, but occasional violations are tolerated. Note that the designations of hard real-time and soft real-time do not depend on the length of the time limit, but on whether a missed deadline can be tolerated.
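The difference between the two can be expressed as a simple check over a set of measured response times. The Python sketch below is a minimal illustration: the function names, the sample values, and the idea of expressing the soft real-time tolerance as a maximum miss ratio are all assumptions made for the example, not part of any standard.

```python
def missed_deadlines(response_times_ms, deadline_ms):
    """Count the responses that took longer than the deadline."""
    return sum(1 for t in response_times_ms if t > deadline_ms)


def meets_hard_real_time(response_times_ms, deadline_ms):
    """Hard real-time: not a single deadline may be missed."""
    return missed_deadlines(response_times_ms, deadline_ms) == 0


def meets_soft_real_time(response_times_ms, deadline_ms, max_miss_ratio):
    """Soft real-time: occasional misses are tolerated, up to max_miss_ratio."""
    if not response_times_ms:
        return True  # nothing measured, so nothing was missed
    misses = missed_deadlines(response_times_ms, deadline_ms)
    return misses / len(response_times_ms) <= max_miss_ratio


if __name__ == "__main__":
    samples_ms = [0.8, 0.9, 1.0, 1.3, 0.7]  # hypothetical measurements
    deadline_ms = 1.0
    print("misses:", missed_deadlines(samples_ms, deadline_ms))
    print("hard real-time:", meets_hard_real_time(samples_ms, deadline_ms))
    print("soft real-time (25% tolerated):",
          meets_soft_real_time(samples_ms, deadline_ms, 0.25))
```

With these sample values, one response out of five exceeds the 1.0 ms deadline, so the system fails the hard real-time check but passes the soft real-time check when up to 25 percent of deadlines may be missed.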

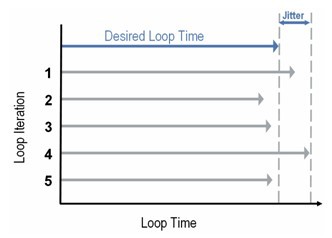

Latency and jitter in real-time control

Image credit: National Instruments

There are two additional terms you should be familiar with when discussing real-time, deterministic control: latency and jitter.

Latency refers to the amount of time between an event and the system’s response to that event. In real-time networks, latency should be bounded and consistent. In other words, it should be a known, predictable quantity. Latency should generally also be low, but having a known, consistent amount of latency is more important, in most cases, than having the absolute minimum latency.

Jitter is a measure of how much a response (or update) time deviates from its expected value across multiple iterations of an event. In other words, jitter is the fluctuation in latency over subsequent occurrences of an event. Low jitter is important in motion control applications where the output being controlled is critical to the process, such as the movement of an actuator or the opening and closing of a valve.
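Both quantities can be estimated from a series of measured response times. The Python sketch below is one possible way to do it, under stated assumptions: the function name and sample values are hypothetical, and jitter is reported as the peak-to-peak spread (maximum minus minimum), which is only one of several common conventions. Standard deviation is another.

```python
import statistics


def latency_stats(latencies_us):
    """Summarize measured latencies (in microseconds).

    Returns the mean latency, the worst-case latency, and the jitter
    expressed as the peak-to-peak spread of the samples.
    """
    if not latencies_us:
        raise ValueError("at least one latency sample is required")
    return {
        "mean_us": statistics.fmean(latencies_us),
        "worst_case_us": max(latencies_us),
        "jitter_us": max(latencies_us) - min(latencies_us),
    }


if __name__ == "__main__":
    # Hypothetical measurements from two networks.
    fast_but_erratic = [20, 95, 30, 80, 25]
    slower_but_steady = [100, 102, 98, 101, 99]
    print("fast but erratic: ", latency_stats(fast_but_erratic))
    print("slower but steady:", latency_stats(slower_but_steady))
```

In this made-up data, the first network has the lower average latency but far more jitter, while the second is slower on average yet much more consistent. For closed-loop control, the second is usually the easier one to design around, because its timing is predictable.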
