Recently, our regular contributor Steve Meyer spoke to us about the history of motion control and how PC-based control came to be. Here’s what he said.

In the Industrial Revolution, machinery was mechanical.

Production looms for weaving fabrics and floor coverings were programmed with mechanical memory in the form of hard stops and pins to make sizes of weave and pattern repeatable. So, the notion of automatic machinery is ambiguous when considered in the context of the last few hundred years.

Electronic controls were first designed about 70 years ago, when John Parsons pioneered production manufacture of Sikorsky helicopter blades. His efforts to use early computer technology to cut more precise aerodynamic shapes for the blade profile led directly to the creation of computer numerically controlled (CNC) machine tools.

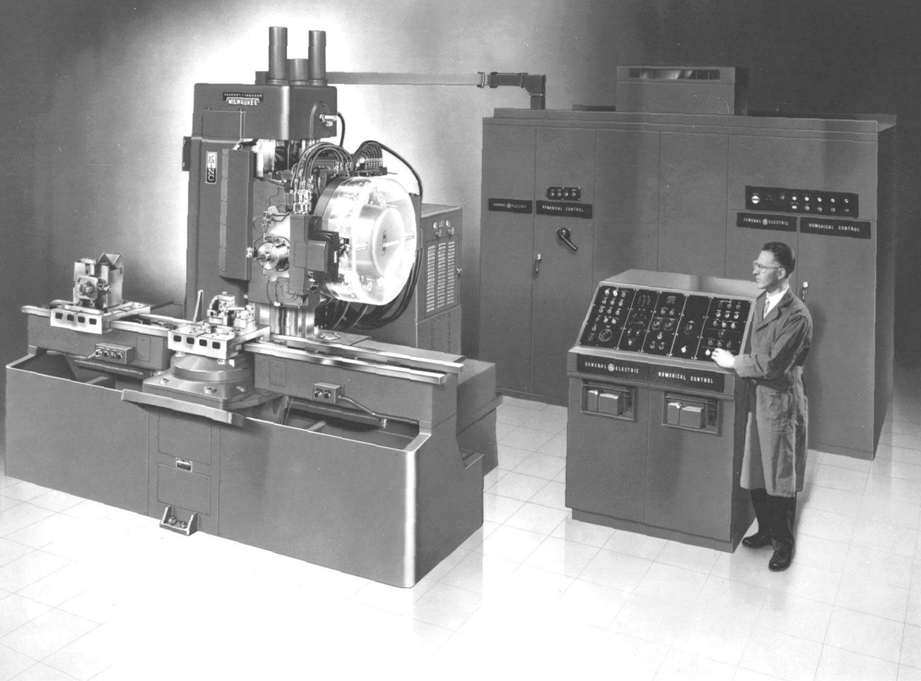

1950s: CNC machining makes automated controls a reality

By the early 1950s, these controls had become the standard of manufacturing for companies including General Electric, Giddings and Lewis and others. In those days, the vacuum-tube-based control system was larger than the machine it controlled. That was the state of the art in computer electronics at the time.

To put that in perspective, the computers in many people’s pockets that make phone calls, receive email, search the Internet and play games have 10,000 times the computing power of the roomful of controls required to run a machine tool back in 1952.

Ferrite-core memory was still new, and the early prototypes cost $200 per kilobyte of memory. Programming was done on a seven-column punch tape read with lights and diodes. Coding was verified by watching the tool move before running parts, and the tape program had to be edited through many iterations until it could be verified.

Compared with manual machining of parts, CNC was clearly a huge improvement, especially to manufacturers such as General Motors. Automotive and aerospace manufacturers are still the largest consumers of CNC equipment. But other areas of manufacturing needed attention as well. GM issued a request for proposal for a control solution that would replace the massive relay cabinets used on the plant floor. With the advent of transistor logic, solid-state memory, and the ability to program with CRT workstations, all the pieces were available for Dick Morley’s team to create the Programmable Controller in 1968.

The Third Industrial Revolution, Automation, was about to begin

At about the same time came distributed control systems (DCSs) with unique control requirements for many individual analog controllers employing PID algorithms and sequential control.

DCSs, often grouped with SCADA systems, ran chemical processes, nuclear power plants, and steel mills. These applications share a requirement for precise control over hours to days. With an array of minicomputers and engineered communications networks, the Texaco Port Arthur petrochemical refinery installed the first “modern” DCS in 1959. Honeywell launched its DCS product for process automation in the early 1960s.

Naturally, CNC, PLC and DCS are separate disciplines with distinct modes of application modeling.

So during this period of control development, their fundamental differences necessitated the creation of unique hardware and software. The subsystems of one type of control could not be applied to the others.

Nowadays, industrial-control manufacturers benefit from computer processor power, memory and communications that offer almost boundless capabilities. It’s true that for those without direct experience of historical control technologies, today’s disparate range of programming and hardware is confusing. But in reality, any of today’s processors (including those in PC-based controls) is capable of performing CNC, motion, PLC or DCS functions. These are different ‘families’ of control, defined by their unique programming and tasking. So it may appear to be a distinction without a difference, but the difference is there when it comes to application.
